Alertmanager helps send notifications for any alerts generated for your servers to email, Slack, HipChat, etc. It is configured through command-line flags and a configuration file.

In a previous blog post, we walked through the setup and configuration of Prometheus, Node Exporter, and CAdvisor step by step. We will use the same docker compose file and append the Alertmanager service to it. You can refer to the previous post here. We will do the following:

1. Append the Alertmanager service to the docker compose file.

2. Write an alertmanager.yml configuration file containing all the necessary receiver details, such as the Slack channel, api_url, and alert message.

3. Write an alert.rules file that defines the alert expressions (conditions) and thresholds. Once the server statistics cross a threshold, an alert is triggered and sent to Slack.

Let’s get started with the steps.

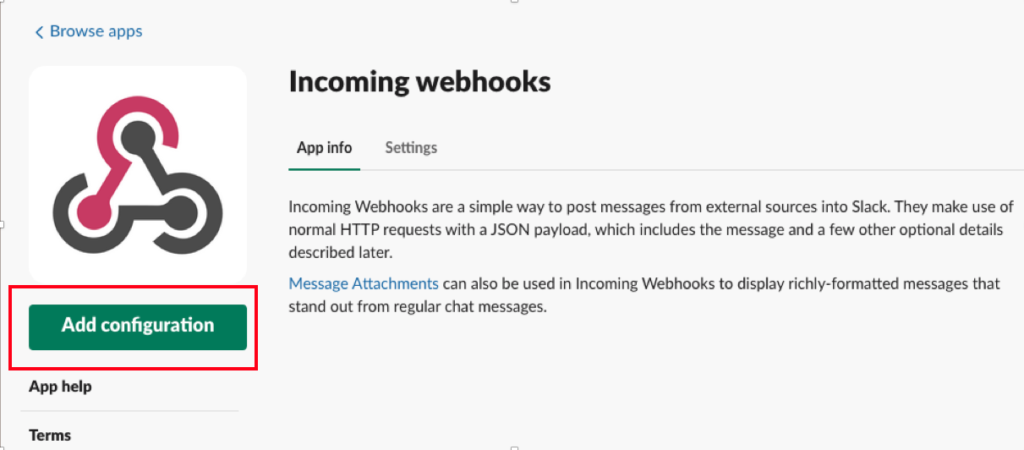

You need a Slack account. If you don’t have one, create it on the Slack website. Create a channel with a name of your choice. Once the channel is created, create an Incoming Webhook for it by opening the link below:

https://your_workspace.slack.com/apps

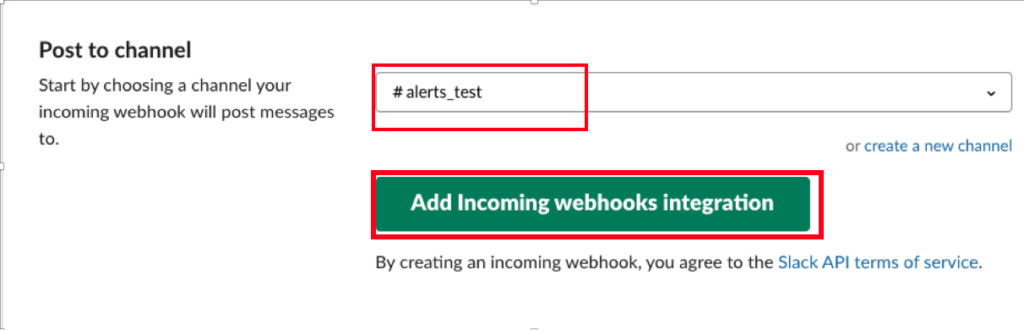

Now select the channel from the dropdown list where you want to post your alert notifications, and click Add Incoming WebHooks integration.

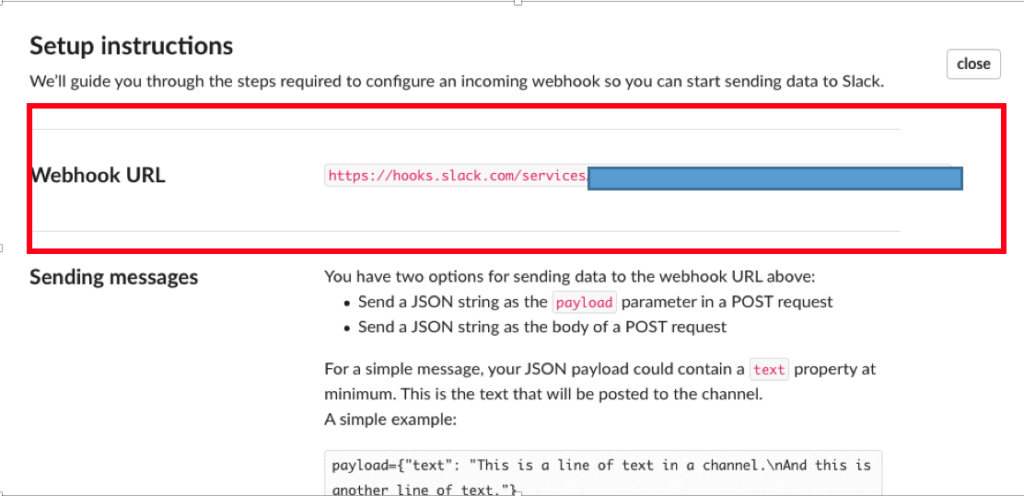

Once you click Add Incoming WebHooks integration, you will get your Webhook URL. You need this URL to push notifications to that particular channel. The same page also shows setup instructions.

Copy the Webhook URL, because you will use it in the alertmanager.yml file. After you add the integration, you will also receive a notification in the channel: “added integration to this channel: “
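Before you wire the webhook into Alertmanager, you can confirm that it works on its own. Slack incoming webhooks accept an HTTP POST with a JSON body such as `{"text": "..."}`. Below is a minimal sketch that uses only the Python standard library. `WEBHOOK_URL` is a placeholder for the URL you just copied. The helper names are hypothetical and are not part of any Slack SDK.

```python
import json
import urllib.request

# Placeholder (assumption): replace with the webhook URL copied from Slack.
WEBHOOK_URL = "https://hooks.slack.com/services/xxxxxx"


def build_slack_request(webhook_url: str, text: str) -> urllib.request.Request:
    """Hypothetical helper: build a POST request carrying a plain-text Slack message as JSON."""
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> int:
    """Hypothetical helper: send the request and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

If the URL is correct, calling `send(build_slack_request(WEBHOOK_URL, "hello from monitoring"))` should return `200`, and the message should appear in your channel.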

Now let’s spin up the Alertmanager container. Add the piece of YAML below to the docker compose file, under the `services:` section. You can get the complete set of code from the DevOpsAge official repository.

```yaml
  alertmanager:
    image: prom/alertmanager:latest
    volumes:
      - ./alertmanager.yml:/alertmanager.yml
    command:
      - '--config.file=/alertmanager.yml'
    ports:
      - '9093:9093'
```

Now create a file named alertmanager.yml and paste in the YAML below.

```yaml
route:
  group_by: [cluster]
  receiver: alerts-test          # default receiver for every alert
  routes:
    - match:
        severity: slack
      receiver: alerts-test

receivers:
  - name: alerts-test
    slack_configs:
      - api_url: https://hooks.slack.com/services/xxxxxx  # webhook URL
        channel: '#alerts_test'
        icon_url: https://avatars3.githubusercontent.com/u/3380462
        send_resolved: true      # also notify when the alert clears
        text: " \nsummary: {{ .CommonAnnotations.summary }}\ndescription: {{ .CommonAnnotations.description }}"
```
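Once Alertmanager is running, you can test the Slack route without waiting for Prometheus. To do this, post a synthetic alert to Alertmanager’s v2 HTTP API. The `POST /api/v2/alerts` endpoint takes a JSON list of alerts, each with `labels`, `annotations`, and optional `startsAt`/`endsAt` timestamps. The sketch below assumes Alertmanager is reachable at `http://localhost:9093`. The alert name, the annotation text, and the helper names are hypothetical.

```python
import json
import urllib.request
from datetime import datetime, timedelta, timezone

# Assumption: Alertmanager is published on localhost:9093 (see the compose file).
ALERTMANAGER_URL = "http://localhost:9093"


def build_test_alert(alertname: str, minutes: int = 5) -> list:
    """Hypothetical helper: one-alert payload in the shape accepted by POST /api/v2/alerts."""
    now = datetime.now(timezone.utc).replace(microsecond=0)
    return [
        {
            "labels": {"alertname": alertname, "severity": "critical"},
            "annotations": {
                "summary": "Synthetic test alert",
                "description": "Sent by hand to check the Slack route.",
            },
            "startsAt": now.isoformat(),
            # endsAt makes the alert resolve on its own after `minutes`.
            "endsAt": (now + timedelta(minutes=minutes)).isoformat(),
        }
    ]


def post_alerts(base_url: str, alerts: list) -> int:
    """Hypothetical helper: POST the alerts and return the HTTP status code."""
    req = urllib.request.Request(
        f"{base_url}/api/v2/alerts",
        data=json.dumps(alerts).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.status
```

The test alert’s `severity` label is `critical`, not `slack`, so it does not match the sub-route. With the configuration above, it falls through to the top-level `receiver: alerts-test` and should still arrive in `#alerts_test`. Because `send_resolved: true` is set, a resolved message should follow some time after `endsAt` passes.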

Append the YAML below to the end of prometheus.yml. Replace 10.0.3.192 with the address where your Alertmanager is reachable.

```yaml
alerting:
  alertmanagers:
    - scheme: http
      static_configs:
        - targets:
            - "10.0.3.192:9093"
```
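After the stack is restarted, you can ask Prometheus which Alertmanagers it has discovered through its `/api/v1/alertmanagers` endpoint. If the `alerting` block is wrong, the list of active Alertmanagers will be empty. Below is a small sketch that assumes Prometheus is published on `http://localhost:9090`. The helper names are hypothetical.

```python
import json
import urllib.request

# Assumption: Prometheus is published on localhost:9090.
PROMETHEUS_URL = "http://localhost:9090"


def active_alertmanagers(payload: dict) -> list:
    """Hypothetical helper: URLs of the Alertmanagers Prometheus currently sends alerts to."""
    if payload.get("status") != "success":
        raise ValueError(f"Prometheus API error: {payload}")
    return [am["url"] for am in payload["data"]["activeAlertmanagers"]]


def fetch_json(url: str) -> dict:
    """Hypothetical helper: GET a URL and decode its JSON body."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.load(resp)
```

`active_alertmanagers(fetch_json(PROMETHEUS_URL + "/api/v1/alertmanagers"))` should return something like `['http://10.0.3.192:9093/api/v2/alerts']`.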

Finally, create the alert rules. You can find a sample alert rule in the DevOpsAge GitHub repository. All alerts, along with their thresholds, need to be written in alert.rules, and depending on your requirements this file can get quite long. A sample format is shown below:

```yaml
groups:
  - name: targets
    rules:
      - alert: monitor_service_down
        expr: up == 0
        for: 30s
        labels:
          severity: critical
        annotations:
          summary: "Monitor service non-operational"
          description: "Service {{ $labels.instance }} is down."

  - name: containers
    rules:
      - alert: cadvisor_container_down
        expr: absent(container_memory_usage_bytes{name="monitoring_cadvisor_1"})
        for: 30s
        labels:
          severity: critical
        annotations:
          summary: "CAdvisor container down"
          description: "Cadvisor container is down for more than 30 seconds."
```
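The `for: 30s` clause keeps a short blip from triggering a notification. When `expr` first returns a result, the alert is only pending. It becomes firing once the condition has held for at least 30 seconds at a rule evaluation. The sketch below is a simplified model of that state machine. It tracks one alert with no labels and does no resolved-alert bookkeeping, so it is not Prometheus’s actual implementation. `AlertState` and `evaluate` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AlertState:
    """Hypothetical, simplified state of a single alerting rule."""
    state: str = "inactive"  # one of: inactive, pending, firing
    active_since: Optional[float] = None  # time the expression first returned a result


def evaluate(prev: AlertState, now: float, condition: bool, for_seconds: float) -> AlertState:
    """Apply one rule evaluation under a simplified model of the `for` clause."""
    if not condition:
        # The expression returned nothing: the alert is (or becomes) inactive.
        return AlertState()
    since = prev.active_since if prev.active_since is not None else now
    # Stay pending until the condition has held for at least `for_seconds`.
    state = "firing" if now - since >= for_seconds else "pending"
    return AlertState(state=state, active_since=since)
```

Assume a 15-second evaluation interval. Evaluating at t = 0, 15, 30, 45, 60 with the condition false, true, true, true, false gives inactive, pending, pending, firing, inactive.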

The last thing to do is add the rule file to the Prometheus configuration. Add the piece of code below to prometheus.yml. Check here for reference.

```yaml
rule_files:
  - 'alert.rules'
```

Also, don’t forget to update the Prometheus service in the docker compose file. Add the following line under its `volumes` section:

```yaml
- ./alert.rules:/etc/prometheus/alert.rules
```

Check the complete docker compose file here for reference.

Now recreate all the containers from the docker compose file, and you should be good to go.

```shell
docker-compose -f docker-compose-mon.yml down
docker-compose -f docker-compose-mon.yml up -d
```

Verify that all the containers are running (`docker ps`). If any container fails or exits, check its logs and troubleshoot:

```shell
docker logs <container_id>
```
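Besides checking the container logs, you can ask the services directly whether they are up. Both Prometheus and Alertmanager expose a `/-/ready` endpoint that returns HTTP 200 once the service is ready to serve traffic. The sketch below polls both. It assumes the ports from the compose file are published on `localhost`, and `ENDPOINTS`, `is_ready`, and `check_all` are illustrative names.

```python
import urllib.request

# Assumption: ports 9090 and 9093 are published on localhost by the compose file.
ENDPOINTS = {
    "prometheus": "http://localhost:9090/-/ready",
    "alertmanager": "http://localhost:9093/-/ready",
}


def is_ready(url: str, timeout: float = 5.0) -> bool:
    """Return True if the endpoint answers HTTP 200, False on any connection or HTTP error."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200
    except OSError:
        # URLError, HTTPError (non-2xx) and socket timeouts are all OSError subclasses.
        return False


def check_all(endpoints: dict) -> dict:
    """Map each service name to its readiness."""
    return {name: is_ready(url) for name, url in endpoints.items()}
```

With a healthy stack, `check_all(ENDPOINTS)` should return `True` for both services.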

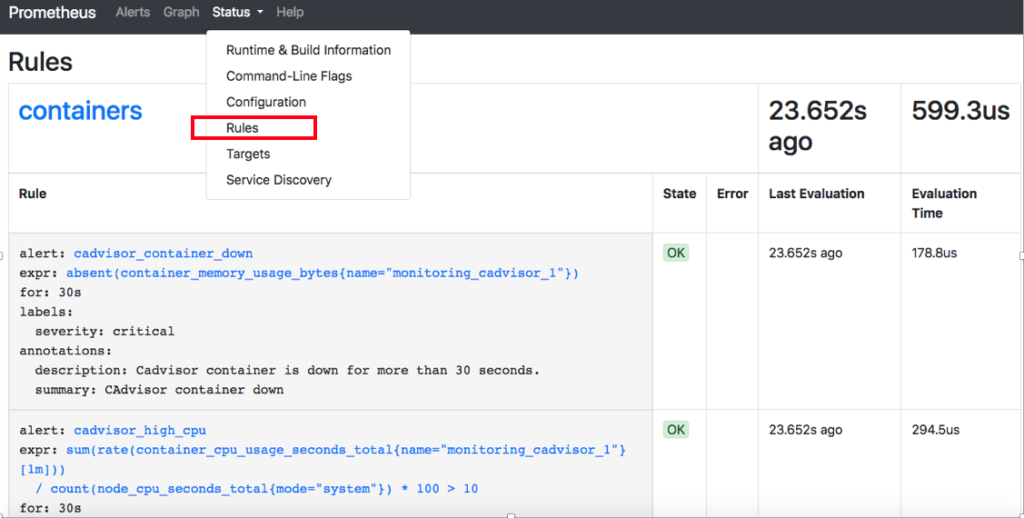

Now check whether the rules are set and configured correctly. Open Prometheus at `<your-server-ip>:9090`.

From the Status menu, click Rules, and you will see the alert rules you have set, as shown in the screenshot below.
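The same information is available from Prometheus’s HTTP API at `/api/v1/rules`, which is handy for scripted checks. The sketch below lists every loaded alerting rule with its `health` field, where `ok` means the last evaluation succeeded. The base URL is an assumption for a local setup, and the helper names are hypothetical.

```python
import json
import urllib.request

# Assumption: Prometheus is published on localhost:9090.
PROMETHEUS_URL = "http://localhost:9090"


def summarize_rules(payload: dict) -> list:
    """Hypothetical helper: (group, rule name, health) for every loaded alerting rule."""
    rows = []
    for group in payload["data"]["groups"]:
        for rule in group["rules"]:
            if rule.get("type") == "alerting":
                rows.append((group["name"], rule["name"], rule.get("health")))
    return rows


def fetch_rules(base_url: str) -> dict:
    """Hypothetical helper: GET /api/v1/rules, restricted to alerting rules."""
    with urllib.request.urlopen(f"{base_url}/api/v1/rules?type=alert", timeout=10) as resp:
        return json.load(resp)
```

With the alert.rules file above, `summarize_rules(fetch_rules(PROMETHEUS_URL))` should list `monitor_service_down` and `cadvisor_container_down`.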

Now, intentionally bring down the CAdvisor container to check whether the alert is detected and a notification is sent to the Slack channel.

Stop the CAdvisor container:

```shell
docker stop monitoring_cadvisor_1
```

Wait for some time. If you configured everything correctly, you will get a notification in Slack.

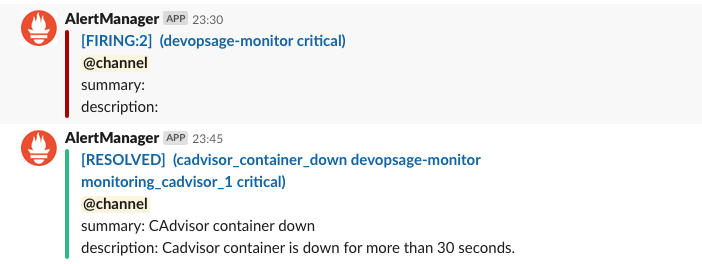

Here, we first get the alert notification in the Slack channel, and once we start the container again, we are notified that the alert is resolved.

While the alert is firing, we cannot see any summary or description. This is probably because we stopped the CAdvisor container itself.

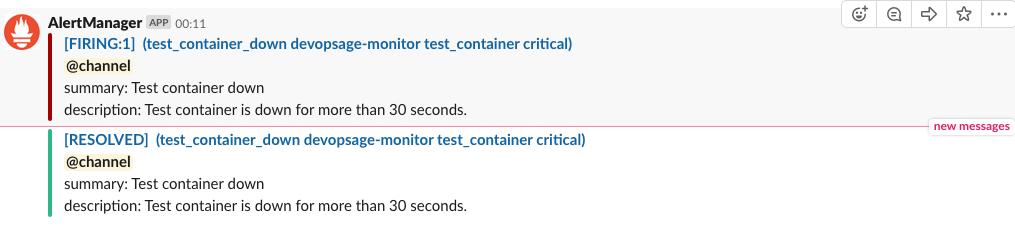

Let’s test it with a test container and see whether the notification includes the summary and description.

We can see that the notification for the test container does include the summary and description.

An alert may take up to 5 minutes to send a notification, so be patient.
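Several stages add to this delay. Prometheus needs a scrape to see the failure, a rule evaluation to mark the alert pending, and the `for` duration to pass, which is checked only at evaluation ticks. Alertmanager then holds a new alert group for `group_wait` before notifying. Follow-up messages for a group that has already notified, including the resolved message, wait for the next `group_interval`. That interval defaults to 5 minutes, which likely explains the figure above. The sketch below turns this into a rough upper bound. It is a simplified estimate, and the function names are hypothetical. Take the interval values from your own prometheus.yml and alertmanager.yml. Prometheus defaults `scrape_interval` and `evaluation_interval` to 1m, and Alertmanager defaults `group_wait` to 30s and `group_interval` to 5m.

```python
import math


def worst_case_firing_delay(scrape_interval: float, evaluation_interval: float,
                            for_duration: float, group_wait: float) -> float:
    """Rough upper bound (seconds) from an outage to the first notification.

    Simplified model: the failure shows up within one scrape, the next evaluation
    marks the alert pending, `for` is only checked at evaluation ticks, and
    Alertmanager holds a new group for group_wait.
    """
    for_ticks = math.ceil(for_duration / evaluation_interval) * evaluation_interval
    return scrape_interval + evaluation_interval + for_ticks + group_wait


def worst_case_resolved_delay(scrape_interval: float, evaluation_interval: float,
                              group_interval: float) -> float:
    """Rough upper bound (seconds) from recovery to the resolved message,
    for a group that has already sent a notification."""
    return scrape_interval + evaluation_interval + group_interval
```

Suppose the scrape and evaluation intervals are 15 seconds, with `for: 30s` and `group_wait: 30s`. Then `worst_case_firing_delay(15, 15, 30, 30)` gives 90 seconds, and `worst_case_resolved_delay(15, 15, 300)` gives 330 seconds.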

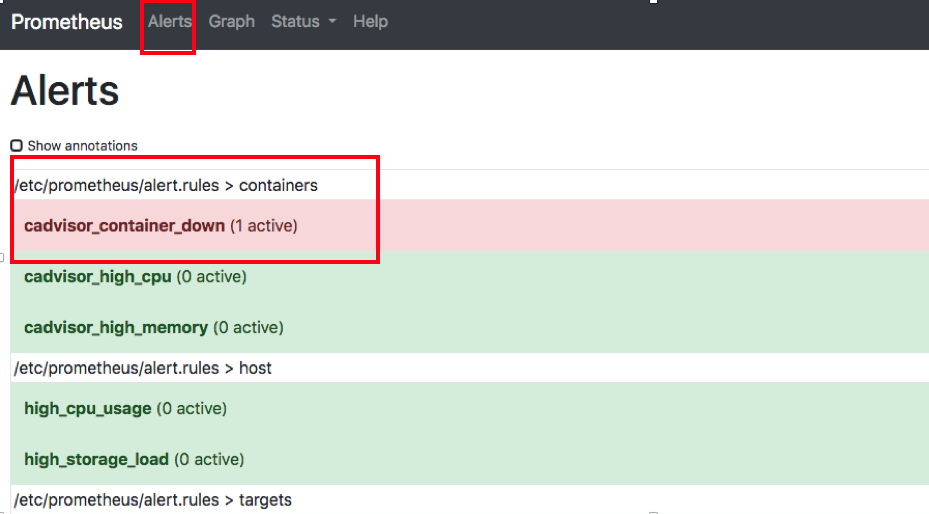

Also, check the Alerts tab in the Prometheus dashboard.

Here we can see that the alert is in the firing state. That’s all for this blog post. Try it hands-on and test it yourself.
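You can check the same thing from a script. Prometheus’s `/api/v1/alerts` endpoint returns all active alerts with their `state` (`pending` or `firing`), labels, and annotations. Below is a minimal sketch that assumes Prometheus is published on `http://localhost:9090`. The helper names are hypothetical.

```python
import json
import urllib.request

# Assumption: Prometheus is published on localhost:9090.
PROMETHEUS_URL = "http://localhost:9090"


def firing_alerts(payload: dict) -> list:
    """Hypothetical helper: (alertname, summary) for every alert currently firing."""
    return [
        (alert["labels"].get("alertname"), alert.get("annotations", {}).get("summary", ""))
        for alert in payload["data"]["alerts"]
        if alert.get("state") == "firing"
    ]


def fetch_alerts(base_url: str) -> dict:
    """Hypothetical helper: GET /api/v1/alerts and decode the JSON body."""
    with urllib.request.urlopen(f"{base_url}/api/v1/alerts", timeout=10) as resp:
        return json.load(resp)
```

While CAdvisor is stopped, `firing_alerts(fetch_alerts(PROMETHEUS_URL))` should include `cadvisor_container_down`.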

If you like our content here at DevOpsAge, please support us by sharing this post.

Please like and follow us on LinkedIn, Facebook, Twitter, and GitHub.

Also, please comment on the post with your views and let us know if any changes are needed.

Thanks!!

You may also want to look into:

Monitoring Servers and Docker Containers using Prometheus and Grafana.

Creating Dashboard in Grafana

How to Add Nodes to be Monitored in Grafana